Whisper Hebrish: A Code-Switching ASR Fine-Tune for English-Hebrew Speech

Published: 18 Nov 2025

Originally published on Hugging Face.

I created a personal fine-tune of OpenAI's Whisper (Large V3 Turbo) on my own voice, then developed a public fine-tune called Whisper Hebrish to handle English-Hebrew code-switching: the natural mixing that happens when speakers blend two languages mid-sentence.

What Is Code-Switching?

Code-switching is the practice of alternating between languages within a single sentence or conversation. English speakers in Israel might say "I'm popping down to the makolet" (grocery store) or "I need to renew my teudat zehut" (ID card). It is common in multilingual communities, which is where blends such as Spanglish and Hebrish come from, and it is a linguistically authentic pattern, not an error.

The Problem With Standard ASR

ASR models map audio to text through learned acoustic patterns and token prediction, not through explicit awareness of which language is being spoken. Specifying a single language code such as "en" tells the model to expect monolingual speech. Code-switching breaks that assumption, and accuracy drops sharply when the input mixes languages. Here's what stock Whisper Large V3 Turbo produces for a code-switched sentence:

I went to the Macaulay today to pick up some bread and I also got my Theodette Sahoot.

And here's the Whisper Hebrish output:

I went to the makolet today to pick up some bread, and I also got my teudat zehut.

Synthetic Data Makes This Easy

LLMs excel at generating synthetic training data for ASR fine-tuning. I used Claude to generate 500 Hebrew/English words and phrases commonly used by English-speaking residents of Israel, selected the subset that stock Whisper struggled with, and then built sentence-level training data around those terms. Claude also generated a GUI tool that made recording efficient.
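
The selection step can be sketched as follows. This is a minimal illustration under stated assumptions, not the script actually used for Whisper Hebrish: it assumes you already have a stock-Whisper transcript of a recording containing each candidate term, and the helper names (normalize, select_hard_terms, build_sentences), the sample transcripts, and the sentence templates are all hypothetical. A term counts as "struggled with" when its transliteration does not appear as a whole word or phrase in the stock transcript.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, strip punctuation (keeping apostrophes), collapse whitespace."""
    return " ".join(re.sub(r"[^\w\s']", "", text.lower()).split())


def select_hard_terms(stock_outputs: dict[str, str]) -> list[str]:
    """Return the terms the stock model failed to transcribe correctly.

    stock_outputs is a hypothetical mapping from each transliterated term
    (e.g. "makolet") to the stock model's transcript of a recording of it.
    """
    hard = []
    for term, transcript in stock_outputs.items():
        # Pad with spaces so a term only matches as a whole word or phrase.
        if f" {normalize(term)} " not in f" {normalize(transcript)} ":
            hard.append(term)
    return hard


def build_sentences(terms: list[str], templates: list[str]) -> list[str]:
    """Fill each template's {term} slot with each hard term."""
    return [template.format(term=term) for term in terms for template in templates]


# Illustrative inputs: the first two transcripts echo the stock output shown
# earlier in this post; the third is invented to show a term that is kept.
stock_outputs = {
    "makolet": "I went to the Macaulay today to pick up some bread",
    "teudat zehut": "and I also got my Theodette Sahoot.",
    "shalom": "Shalom, how are you?",
}
hard_terms = select_hard_terms(stock_outputs)
print(hard_terms)  # ['makolet', 'teudat zehut']
print(build_sentences(hard_terms, ["I'm popping down to the {term}.", "Where is the {term}?"]))
```

Exact matching is deliberately strict: a spelling variant of a correct transliteration also counts as a miss, which errs toward including more terms in the training set.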

The recording requirements are simple: use a decent microphone, keep each sample under 30 seconds, and speak naturally. Pair each audio file with its sentence transcript in a JSON array, and you have a dataset.
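
A manifest builder along these lines would enforce the 30-second limit and write the JSON array. It is a sketch, assuming PCM WAV recordings readable by Python's standard wave module; the function names and the record fields ("audio", "text", "duration") are hypothetical placeholders, so match them to whatever schema your training script expects.

```python
import json
import wave
from pathlib import Path

# Whisper processes audio in 30-second windows; in common training setups,
# longer clips are truncated during feature extraction, so the audio no
# longer matches the full transcript.
MAX_SECONDS = 30.0


def wav_duration(path: Path) -> float:
    """Return the duration of a PCM WAV file in seconds."""
    with wave.open(str(path), "rb") as wf:
        return wf.getnframes() / wf.getframerate()


def build_manifest(pairs: list[tuple[str, str]], out_path: Path) -> list[dict]:
    """Write (audio path, transcript) pairs to a JSON array, skipping overlong clips."""
    records = []
    for audio_path, text in pairs:
        duration = wav_duration(Path(audio_path))
        if duration > MAX_SECONDS:
            print(f"skipping {audio_path}: {duration:.1f}s is longer than {MAX_SECONDS:.0f}s")
            continue
        records.append({"audio": audio_path, "text": text, "duration": round(duration, 2)})
    # ensure_ascii=False keeps any Hebrew script in transcripts human-readable.
    out_path.write_text(json.dumps(records, ensure_ascii=False, indent=2), encoding="utf-8")
    return records
```

Checking durations while building the manifest catches overlong takes before a GPU run rather than during training.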

From Dataset to Fine-Tune

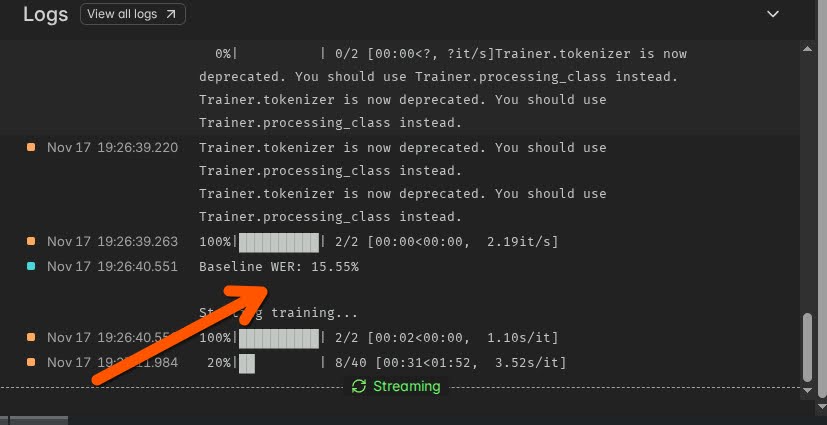

I fine-tuned Whisper Large V3 Turbo on an A100 GPU through Modal, producing both a private personal model and the public code-switching model. Whisper Hebrish is available on the Hugging Face Model Hub and on Replicate for serverless inference. There's also an interactive demo you can try.

Real-World Results

Fine-tuning ASR models really works. My personal fine-tune outperformed commercial models and the stock Whisper API, with the improvement measurable as a lower Word Error Rate (WER). I voice-type for about two hours a day, and the local model consistently outperforms every alternative I have tried.
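
WER is the word-level edit distance between a reference transcript and the model's output, divided by the number of reference words. The sketch below implements that standard definition from scratch; the light normalization (lowercasing, dropping punctuation) is an assumption, since WER figures depend on how text is normalized, and maintained libraries such as jiwer offer the same metric. Applied to the two example outputs earlier in this post, it gives an illustrative per-sentence score, not a benchmark result.

```python
import re


def normalize_words(text: str) -> list[str]:
    """Lowercase, strip punctuation (keeping apostrophes), and split into words."""
    return re.sub(r"[^\w\s']", "", text.lower()).split()


def wer(reference: str, hypothesis: str) -> float:
    """Word Error Rate = (substitutions + deletions + insertions) / reference length."""
    ref = normalize_words(reference)
    hyp = normalize_words(hypothesis)
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # prev[j] = edit distance between the reference words processed so far
    # and the first j hypothesis words (Levenshtein DP, one row at a time).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # substitution, or exact match when cost is 0
            )
        prev = curr
    return prev[-1] / len(ref)


reference = "I went to the makolet today to pick up some bread, and I also got my teudat zehut."
stock = "I went to the Macaulay today to pick up some bread and I also got my Theodette Sahoot."
print(round(wer(reference, stock), 3))  # 0.167: 3 substitutions over 18 reference words
```

The Whisper Hebrish output shown earlier matches the reference exactly, so it scores 0.0 on this sentence. A meaningful comparison averages over a held-out set of recordings rather than a single sentence.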

More importantly, this makes speech-to-text genuinely usable for multilingual daily life. It also shows that ASR fine-tuning is accessible and not limited to specialists in medical or legal domains. Even everyday speech benefits from personalization, and anyone can build and deploy a custom ASR model with readily available tools.

The dataset is published at huggingface.co/datasets/danielrosehill/English-Hebrew-Mixed-Sentences.

Daniel Rosehill

AI developer and technologist specializing in AI systems, workflow orchestration, and automation. Specific interests include agentic AI, workflows, MCP, STT and ASR, and multimodal AI.