I've been working with code generation LLMs daily for over a year now (Windsurf, Aider, Claude Code, Gemini, OpenHands, Qwen Code, and whatever else shows up on Hacker News that week), and I've arrived at a conclusion that sits uncomfortably between two camps. The vibe coding evangelists are right that these tools are transformative; I've watched Sonnet migrate an entire MkDocs site to Astro in about ten minutes, a task that would have taken me days. But the critics are also right that there's something deeply troubling about a future where most people can conjure software into existence without understanding what it does or how it works. My latest project, Vibe Code and Learn, is my attempt to square that circle: a framework where AI code generation becomes a conduit to structured learning rather than a replacement for it.

Repository: danielrosehill/Vibe-Code-And-Learn (GitHub)

The feudalism scenario that keeps me up at night

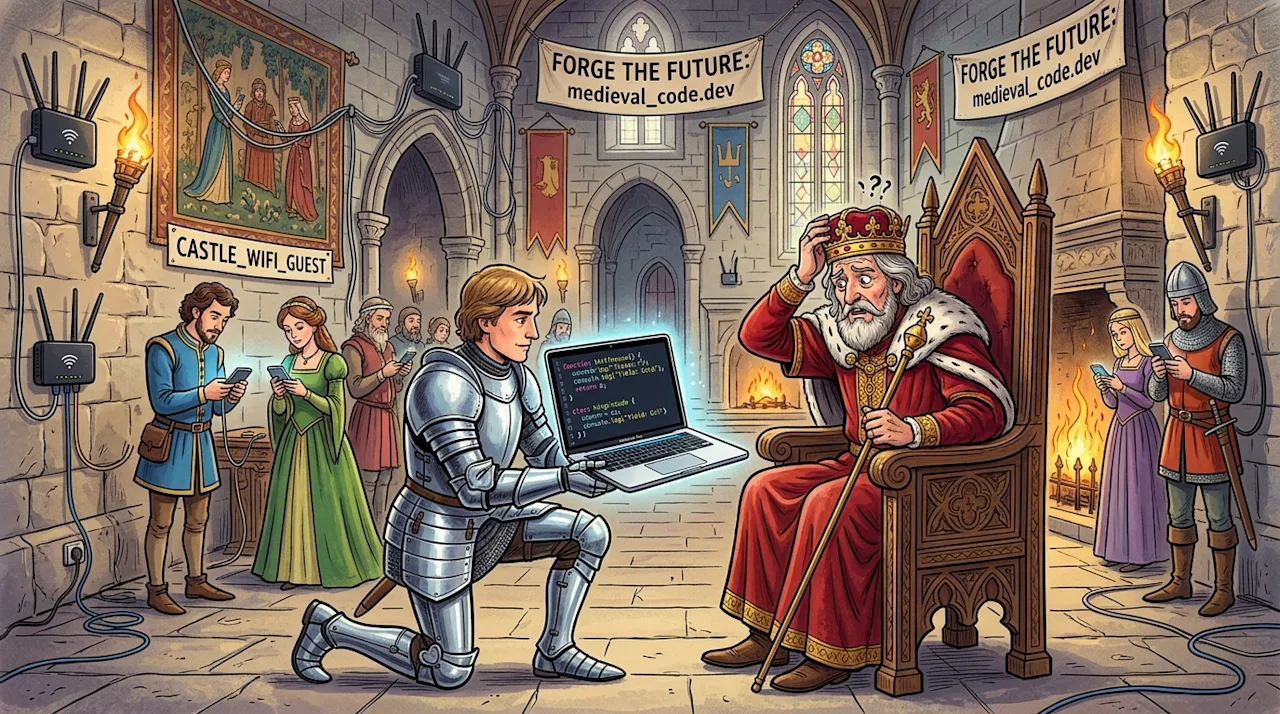

Here's the scenario that genuinely worries me, and I don't think it's hyperbolic. Imagine a world five years from now where a tiny priesthood of people understand how software actually works — the algorithms, the data structures, the architectural patterns, the security implications — and they bake that knowledge into increasingly powerful AI tools. Meanwhile, the vast majority of people who interact with software daily can operate these tools but have no real understanding of what's happening under the hood. They can prompt an AI to build them an app, but they can't evaluate whether the app is secure, efficient, maintainable, or even doing what they think it's doing. That's not democratisation; it's a new kind of feudalism where the lords write the models and the serfs write the prompts. For anyone who values the open source ecosystem and the idea that technology should empower rather than infantilise, that would be an incredibly retrograde evolution.

I'm not being precious about this. I'm not a professional developer — I'm a tech communications specialist who codes enough to be dangerous and who has burned large chunks of cash on wasted API credits trying to get AI to solve problems I could have handled myself in five minutes, if only I'd understood the codebase better. The reality of working with these tools daily is complicated in ways that neither the evangelists nor the doomers capture. Sometimes the AI saves you days of work. Sometimes it sends you down a rabbit hole that costs more in time and money than the manual approach would have. The variable that determines which outcome you get is almost always how well you understand what you're asking the AI to do — which is exactly the kind of understanding that pure vibe coding erodes.

Education in reverse: do the interesting thing first

The Vibe Code and Learn framework uses Claude Code subagents organised into two groups that work in tandem. The Doer agents handle the actual coding work — planning, code creation, debugging, the stuff you'd expect. But alongside them, a parallel team of Educator agents watches what's happening and builds a personalised curriculum from it. A Curriculum Writer maps the skills being used during each coding session into an evolving learning plan. A Lesson Writer creates personalised lessons using your actual code as examples — not abstract textbook exercises, but explanations of what just happened in the project you're actually building. A Teacher delivers interactive educational content when you're ready for it. And a Session Summary Agent ties it all together by recording what happened during each session so the curriculum stays current.
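To make the two-group split concrete, here is a minimal Python sketch of the roster described above. It is an illustration only, not the framework's actual layout: the `Agent` and `Group` types, the `agents_in` helper, and the three Doer names (`Planner`, `Coder`, `Debugger`) are assumptions inferred from "planning, code creation, debugging". The four Educator names come from the description itself.

```python
from dataclasses import dataclass
from enum import Enum


class Group(Enum):
    """The two teams that work in tandem."""
    DOER = "doer"
    EDUCATOR = "educator"


@dataclass(frozen=True)
class Agent:
    name: str
    group: Group
    role: str


# Hypothetical roster. Doer names are placeholders; Educator names follow the post.
ROSTER = [
    Agent("Planner", Group.DOER, "plans the work"),
    Agent("Coder", Group.DOER, "writes the code"),
    Agent("Debugger", Group.DOER, "tracks down and fixes failures"),
    Agent("Session Summary Agent", Group.EDUCATOR, "records what happened in each session"),
    Agent("Curriculum Writer", Group.EDUCATOR, "maps skills used into an evolving learning plan"),
    Agent("Lesson Writer", Group.EDUCATOR, "writes lessons using the user's own code"),
    Agent("Teacher", Group.EDUCATOR, "delivers interactive lessons on request"),
]


def agents_in(group: Group) -> list[str]:
    """Return the names of all agents that belong to the given group."""
    return [agent.name for agent in ROSTER if agent.group is group]


if __name__ == "__main__":
    print("Doers:", agents_in(Group.DOER))
    print("Educators:", agents_in(Group.EDUCATOR))
```

The point of modelling it this way is that the Educator group is a first-class peer of the Doer group, not an afterthought bolted onto the coding agents.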

The key insight — and the thing I find most exciting about this model — is that the curriculum builds organically from what you're actually working on. Traditional programming education follows a syllabus designed by someone else: here are variables, here are loops, here are functions, here's object-oriented programming, and by the way here's a contrived exercise involving a zoo animal hierarchy that nobody has ever needed to build in production. The Vibe Code and Learn model flips this completely. You build the thing you actually want to build — the interesting thing, the thing that motivated you to open the terminal in the first place — and the educational layer extracts the underlying concepts afterwards. It's education in reverse, and I suspect it maps much better to how adults actually learn: through motivated, contextual engagement rather than abstract prerequisites.
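One way to picture "the curriculum builds organically" is as a running tally of the skills each session touched. The sketch below is my own simplification under stated assumptions: `SessionSummary`, `Curriculum`, `absorb`, and `next_topics` are hypothetical names, and ranking topics by how often a skill came up is just one plausible heuristic, not necessarily what the framework does.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class SessionSummary:
    """What a (hypothetical) session summary might record."""
    project: str
    skills_used: list[str]


@dataclass
class Curriculum:
    """An evolving learning plan built from real sessions, not a fixed syllabus."""
    skill_counts: Counter = field(default_factory=Counter)

    def absorb(self, summary: SessionSummary) -> None:
        # Each session adds to the tally of skills the learner has actually used.
        self.skill_counts.update(summary.skills_used)

    def next_topics(self, limit: int = 3) -> list[str]:
        # Assumed heuristic: teach the most frequently encountered skills first.
        return [skill for skill, _ in self.skill_counts.most_common(limit)]


if __name__ == "__main__":
    curriculum = Curriculum()
    curriculum.absorb(SessionSummary("site migration", ["astro components", "markdown frontmatter"]))
    curriculum.absorb(SessionSummary("site migration", ["astro components", "git rebase"]))
    print(curriculum.next_topics(limit=1))
```

Nothing here is decided in advance by a syllabus author: the topic list only ever contains concepts that showed up in the learner's own project.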

Why I'm still optimistic, despite the valid criticism

Despite everything I've said about the feudalism risk, I think vibe coding might be the most exciting advance in technology I've lived through. The tools will get better: Context7 and purpose-built coding LLMs are already addressing the current limitations around hallucination and context management. As the capability ceiling rises, the educational potential becomes more pronounced, not less. A model that can reliably write correct code can also reliably explain that code, identify the patterns it's using, and generate exercises that reinforce the underlying concepts. The question isn't whether AI will transform how we learn to code; it already is doing so, whether intentionally or not. The question is whether we build systems that capture that educational potential deliberately, or whether we let it go to waste in favour of pure output maximisation. I'm betting on deliberate, and this framework is my attempt to show what that could look like.