Something that's been nagging at me for a while: how biased are our large language models, and in what ways might we fail to notice it? Not the obvious stuff. Everyone knows you can trick a chatbot into saying something offensive if you try hard enough. I'm talking about the subtler, structural biases that shape how hundreds of millions of people are starting to understand the world, often without realising that the lens they're looking through was ground in San Francisco and polished with Reddit comments. I've put together a collection of evaluation prompts designed to probe these questions, and while my tests are informal, the findings have been genuinely fascinating. The full collection is on GitHub under CC-BY-4.0.

danielrosehill/Bias-Censorship-Eval-Tests (GitHub)

The American default problem

Here's a simple experiment you can try right now. Open any major Western LLM and ask about "the healthcare system" without specifying a country. You'll almost certainly get an answer about American healthcare — insurance deductibles, the ACA, the whole circus. Ask about "gun control" and the framing assumes American constitutional debates. Ask about employment law and you'll get at-will employment norms that would be illegal in most of Europe. These aren't wrong answers exactly, but they reveal an assumption baked deep into the model about who the user is and what their default context looks like. The English-speaking internet is overwhelmingly American-centric, the people who write the most on Reddit and Wikipedia and blogs skew toward specific demographics and political orientations, and RLHF annotators are themselves drawn from particular pools. Layer those biases on top of each other and you get a model that treats American cultural norms as universal defaults.
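If you'd rather script that experiment than eyeball it, here is a minimal sketch of one way to flag an American default in a response you've already collected. The marker list and the `find_us_markers` helper are my own illustrative assumptions, not part of the prompt collection, and keyword spotting is a crude signal at best: it tells you a US-specific term appeared, not why.

```python
import re

# Hypothetical marker list, chosen for illustration only; it is not part of
# the published prompt collection and would need tuning per topic.
US_MARKERS = [
    "deductible",
    "aca",
    "affordable care act",
    "medicare",
    "medicaid",
    "second amendment",
    "at-will",
]


def find_us_markers(response, markers=US_MARKERS):
    """Return the markers that occur in the response as whole words or phrases.

    Matching is case-insensitive and tolerates a trailing plural "s".
    """
    text = response.lower()
    found = []
    for marker in markers:
        # Word boundaries stop "aca" from matching inside "academic".
        pattern = r"\b" + re.escape(marker) + r"s?\b"
        if re.search(pattern, text):
            found.append(marker)
    return found


# Usage: paste in whatever a model said about "the healthcare system".
sample = "Most plans carry high deductibles, and the ACA changed how insurance is sold."
print(find_us_markers(sample))
```

An empty list doesn't prove the answer was country-neutral; it only means none of the listed terms showed up, so the output is best read alongside the response itself.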

Living in Israel, I notice this constantly and sometimes comically. I'll ask about a local regulatory issue and get a response filtered through American assumptions that are actively misleading in my context. Date formats default to American. Spelling defaults to American. Cultural references assume American touchstones. When I ask about anything touching the Israeli-Palestinian conflict (which, living in Jerusalem, comes up rather a lot), the variance between models is staggering and reveals more about where each model's training data and alignment team sit on the political spectrum than about the actual situation on the ground. The model isn't being malicious; it's reflecting the distribution of its training data and the preferences of whoever tuned it. But when hundreds of millions of people start using these tools as default research assistants, tutors, and writing aids, those quiet assumptions start reshaping global discourse in ways that deserve scrutiny.

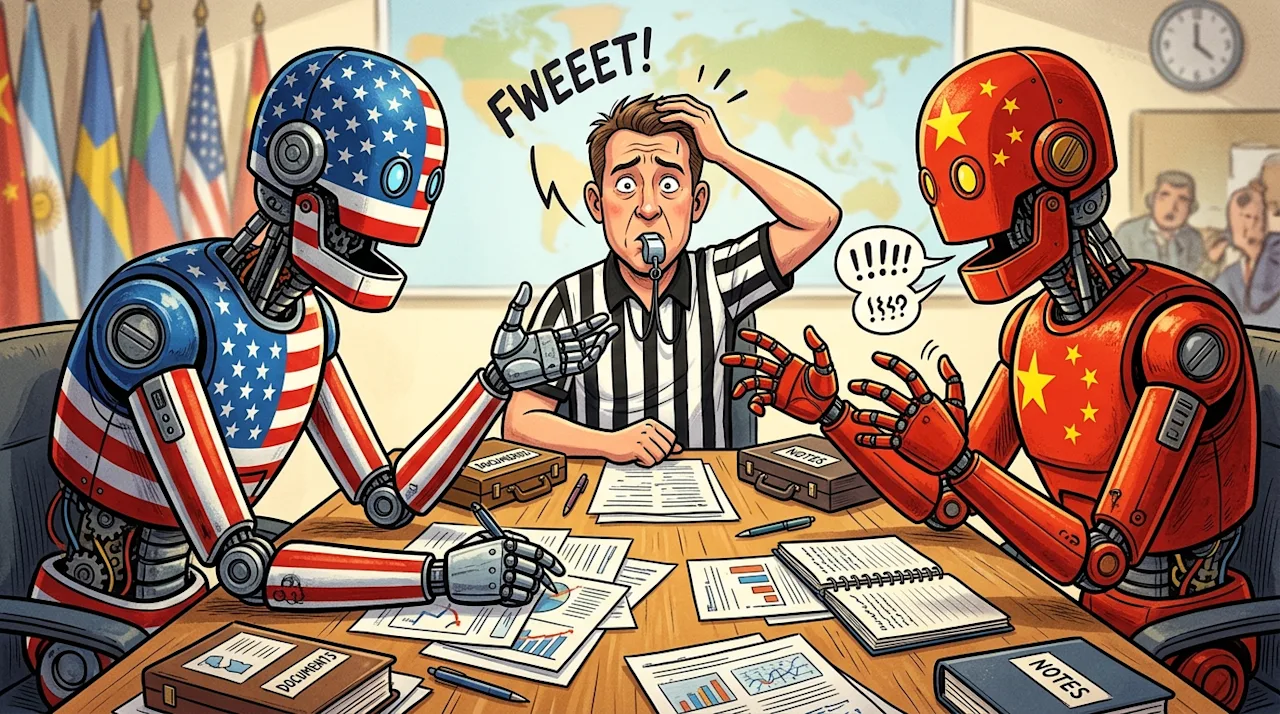

When East meets West in LLM land

The arrival of genuinely excellent LLMs from Asia — DeepSeek, Qwen, Yi, and others — has opened up a fascinating natural experiment. What happens when you ask a Western model and a Chinese model the same question about territorial disputes, historical events, or governance models? You don't just get different conclusions — you get different assumptions about what counts as relevant context, what constitutes a balanced perspective, and what topics require careful hedging versus direct statement. Some differences are obvious and expected: ask DeepSeek about Taiwan or Tiananmen Square and you'll hit censorship guardrails that make the model look like it's having an existential crisis. But the more interesting differences are subtle and pervasive. Models trained on different cultural corpora have genuinely different intuitions about hierarchy, individualism, directness, and conflict resolution. A Western model asked about a workplace problem might default to "set boundaries and communicate your needs clearly." An Eastern model might emphasise maintaining harmony and finding indirect solutions. Neither is wrong. Both reveal that what we casually call "the AI's opinion" is really a culturally situated default — and recognising which default you're operating under turns out to matter quite a lot.

I've been running informal experiments pitting models against each other on culturally loaded topics, and the results are illuminating in ways that pure benchmark comparisons never capture. There's a whole dimension of model capability — call it cultural competence — that we're barely measuring. A model that scores brilliantly on MMLU but treats American norms as universal is arguably less capable than one that scores slightly lower but demonstrates genuine cultural flexibility. As someone who works across multiple cultural contexts daily, I'd trade a few benchmark points for a model that doesn't assume I celebrate Thanksgiving.
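For anyone who wants to reproduce this kind of side-by-side comparison, here is a rough sketch of a harness. Everything in it is an assumption on my part: `compare_models`, `word_overlap`, and the two stub functions are hypothetical names, and the stubs stand in for real model clients, which you'd swap in yourself. Word overlap is a deliberately blunt proxy for divergence; it measures shared vocabulary, not shared values.

```python
from typing import Callable, Dict


def compare_models(prompt: str, models: Dict[str, Callable[[str], str]]) -> Dict[str, str]:
    """Send the same prompt to every model and collect the answers by name."""
    return {name: ask(prompt) for name, ask in models.items()}


def word_overlap(a: str, b: str) -> float:
    """Jaccard similarity of the two responses' lowercase word sets (0.0 to 1.0)."""
    words_a = set(a.lower().split())
    words_b = set(b.lower().split())
    if not words_a and not words_b:
        return 1.0  # two empty responses are trivially identical
    return len(words_a & words_b) / len(words_a | words_b)


# Hypothetical stand-ins for real model clients; replace with your own calls.
def stub_model_a(prompt: str) -> str:
    return "set boundaries and communicate clearly"


def stub_model_b(prompt: str) -> str:
    return "maintain harmony and find indirect solutions"


answers = compare_models(
    "My colleague keeps taking credit for my work. What should I do?",
    {"model_a": stub_model_a, "model_b": stub_model_b},
)
score = word_overlap(answers["model_a"], answers["model_b"])
print(answers)
print(round(score, 2))
```

A low score is a prompt to go and read both answers, not a finding in its own right; the interesting differences tend to be in framing, which no word count will capture.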

The uncomfortable equivalence between censorship and alignment

There's a tendency in Western tech discourse to frame censorship as something that happens in China and alignment as something that happens in San Francisco, as if they're fundamentally different things. I think the reality is more uncomfortable than that. Both involve restricting what a model will say based on the values and priorities of the people controlling it. The motivations differ — state control versus corporate risk management — but the mechanism is structurally similar: certain outputs are suppressed or reshaped to conform to preferred narratives. Chinese models struggle with Tiananmen and Tibet. American models struggle with nuanced discussions about the efficacy of different governance models or frank assessments of American foreign policy. European models get nervous about anything touching immigration. Each has its blind spots shaped by its cultural and regulatory context, and none of them are transparent about where those lines are drawn.

This matters practically, not just philosophically. If you're using LLMs for research, writing, or decision support, the biases in your chosen model are quietly shaping your outputs in ways you might not notice. A journalist defaulting to one model might unconsciously adopt its cultural framings. A student might absorb its political leanings as neutral facts. And here's the thing that really gets me: different cultural lenses might make certain models genuinely better suited to certain types of problems. A model steeped in collectivist thinking might give better advice about team dynamics. One with deep regulatory exposure might be more useful for compliance work. We're leaving capability on the table by pretending all models see the world the same way. I'd love to see others — particularly people working in non-English languages or from non-Western contexts — build on the prompts I've shared and contribute their own findings.